With the rise of digital technologies, the ubiquity of smartphones and the expansion of social media, human rights investigators have access to more information than ever before. With this opportunity comes the challenge of sifting through huge volumes of data to find signals of human rights violations.

One of the solutions we devised in the last few years relies on Amnesty’s biggest strength: its global community of several million members and supporters.

Using crowdsourcing and micro-tasking, we are tapping into the energy of a new generation of human rights activists – one that has unprecedented technological access and proficiency, combined with a desire to make a difference.

Milena Marin

In 2016, we built Amnesty Decoders – a platform that leverages micro-tasking technologies to engage tens of thousands of activists to process large volumes of data such as satellite imagery, documents, pictures or social media messages. This also allows us to go beyond “clicktivism” in our digital campaigns. Instead of asking supporters to “like” or retweet us, we ask them to spend the same amount of time to generate meaningful data for our investigations.

How it works

The core technology underpinning Amnesty Decoders is micro-tasking, a type of crowdsourcing which allows us to split large projects into small tasks that are distributed to many people via the internet. This method is useful for complex problems that require many people to do similar, repetitive tasks, and for problems that machines and algorithms alone can’t solve.

Micro-tasking has been used extensively in the commercial sector and in citizen science projects, but Amnesty has pioneered its use in large-scale human rights investigations. Using this technology, we have been able to turn mountains of unstructured data into actionable insights. We can process documents, pictures, videos, satellite images and social media messages by asking digital volunteers to do small (or micro) tasks such as classifying, identifying features, counting, comparing or digitizing data from offline sources.
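To make the idea concrete, here is a minimal Python sketch of micro-tasking. It is an illustration only, not the Amnesty Decoders code. The function names, the item IDs, the volunteer names and the labels are all hypothetical, and showing each item to several volunteers and keeping the majority answer is an assumption about how such answers might be combined, not something described above.

```python
from collections import Counter
from itertools import cycle


def make_microtasks(item_ids, redundancy=3):
    """Create `redundancy` copies of each item so several volunteers see it."""
    return [(item_id, copy) for item_id in item_ids for copy in range(redundancy)]


def assign(tasks, volunteers):
    """Hand tasks out round-robin (a simplification; real platforms serve tasks on demand).

    With at least `redundancy` volunteers, the copies of one item
    land with different people.
    """
    assignments = {volunteer: [] for volunteer in volunteers}
    for task, volunteer in zip(tasks, cycle(volunteers)):
        assignments[volunteer].append(task)
    return assignments


def aggregate(answers, min_agreement=2):
    """Combine (item_id, label) answers into one label per item.

    An item gets its most common label if at least `min_agreement`
    volunteers chose it; otherwise it is left as None for expert review.
    """
    labels_by_item = {}
    for item_id, label in answers:
        labels_by_item.setdefault(item_id, []).append(label)
    consensus = {}
    for item_id, labels in labels_by_item.items():
        label, count = Counter(labels).most_common(1)[0]
        consensus[item_id] = label if count >= min_agreement else None
    return consensus


# Hypothetical example: two image tiles, three volunteers.
tasks = make_microtasks(["tile-001", "tile-002"], redundancy=3)
assignments = assign(tasks, ["ana", "ben", "chi"])
answers = [
    ("tile-001", "destroyed"), ("tile-001", "destroyed"), ("tile-001", "intact"),
    ("tile-002", "intact"), ("tile-002", "unclear"), ("tile-002", "destroyed"),
]
print(aggregate(answers))
```

In this made-up example, the first tile reaches agreement while the second does not, so it would be passed on for a closer look rather than guessed at.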

What we’ve done so far

Since the launch of the platform in June 2016, more than 50,000 digital activists from around the world have volunteered for our projects. Together they have completed more than 3 million micro-tasks. Below are a few of our most successful projects.

Raqqa: Strike Tracker

In one of the most comprehensive investigations into civilian deaths in a modern conflict, we relied on 3,000 ‘decoders’ to analyze millions of chips of satellite imagery over the Syrian city of Raqqa. Contributing the equivalent of one person working full time for 2.5 years, these volunteers helped us map out the US-led Coalition’s devastating aerial bombardment of Raqqa, determining when each of more than 10,000 buildings was destroyed. Combining this data with field missions and social media reports, the investigation revealed that the US-led Coalition killed more than 1,600 civilians in Raqqa. See more about the project here.
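One way to picture how a destruction date can be narrowed down from a series of satellite images is sketched below in Python. This is an assumption about the general approach, not a description of the method actually used in Strike Tracker: given dated labels for one building, the destruction falls between the last image where it looks intact and the first image where it looks destroyed. The function name, the labels and the dates are hypothetical.

```python
from datetime import date


def destruction_window(observations):
    """Bracket when a building was destroyed.

    observations: list of (image_date, label) pairs, where label is
    "intact" or "destroyed". Returns (last_intact, first_destroyed),
    or None if the building was never labelled destroyed.
    last_intact is None when no earlier intact image exists.
    """
    ordered = sorted(observations)
    first_destroyed = next(
        (day for day, label in ordered if label == "destroyed"), None
    )
    if first_destroyed is None:
        return None
    earlier_intact = [
        day for day, label in ordered
        if label == "intact" and day < first_destroyed
    ]
    last_intact = max(earlier_intact) if earlier_intact else None
    return last_intact, first_destroyed


# Made-up observations for a single building, in no particular order.
observations = [
    (date(2017, 8, 1), "intact"),
    (date(2017, 9, 1), "destroyed"),
    (date(2017, 7, 1), "intact"),
    (date(2017, 10, 1), "destroyed"),
]
print(destruction_window(observations))
```

The more frequently images are available, the narrower this window becomes for each building.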

Online abuse against women: Troll Patrol

Working with technical experts and thousands of ‘decoders’ we built the world’s largest crowdsourced dataset of online abuse against women. Our Troll Patrol study found that 7.1% of tweets sent to women politicians and journalists in the UK and USA in 2017 were problematic or abusive. Women of colour were more likely to be impacted – with black women disproportionately targeted with problematic or abusive tweets. The study culminated in better protections for women on the platform while causing an 11% drop in Twitter’s stock price. See more about the project here.

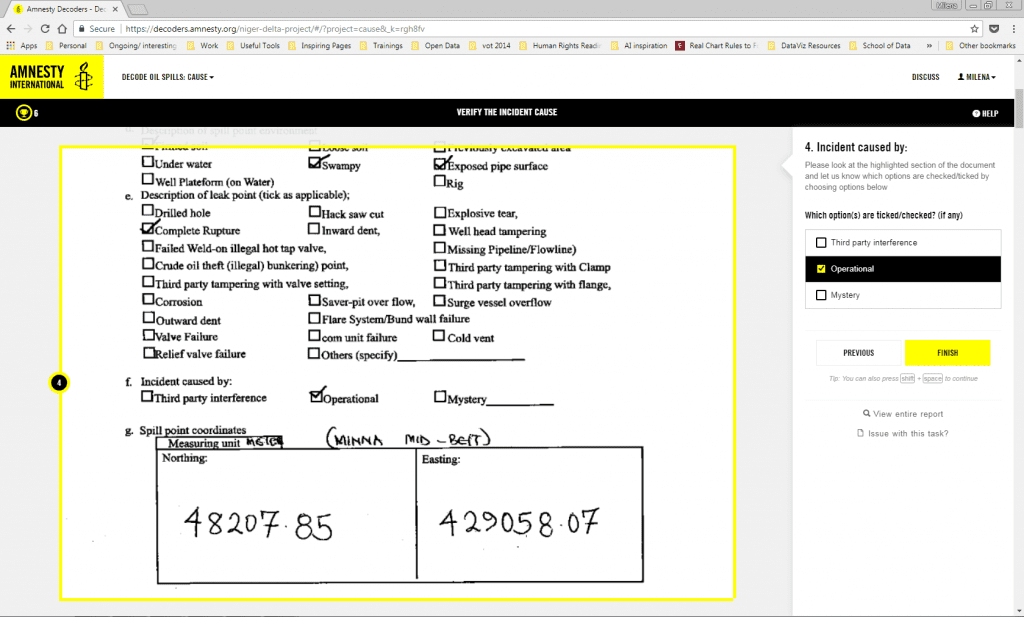

Niger Delta: Decode Oil Spills

We created the first independent, structured database of oil spills in the Niger Delta (Nigeria), working with thousands of ‘decoders’ to digitize 3,592 documents and photographs of spills. Our investigation exposed oil company negligence and identified at least 89 spills that may have been wrongly labelled as theft or sabotage when in fact they were caused by “operational” faults, such as corrosion. If confirmed, this could mean that dozens of affected communities have not received the compensation they deserve.

See more about the project here.

Going forward

We are experimenting with combining this type of crowdsourcing with machine learning to scale up our research even further. We want to build algorithms that automate tasks such as identifying features like buildings, mosques or vehicles in satellite images, and analysing large databases of social media posts to identify potentially abusive messages.
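A simple way to imagine crowdsourcing and machine learning working together is a triage step, sketched below in Python. This is an illustrative assumption, not a description of a system we have built: a model (hypothetical here, represented only by its scores) rates each item between 0 and 1, confident cases are labelled automatically, and uncertain ones go to volunteers. The function name, the thresholds and the scores are made up.

```python
def triage(scores, low=0.2, high=0.8):
    """Split items by model confidence.

    scores: dict mapping item_id to a score in [0, 1], e.g. the
    model's estimate that a message is abusive.
    Returns (auto_positive, auto_negative, needs_volunteers).
    """
    auto_positive, auto_negative, needs_volunteers = [], [], []
    for item_id, score in scores.items():
        if score >= high:
            auto_positive.append(item_id)      # model is confident: yes
        elif score <= low:
            auto_negative.append(item_id)      # model is confident: no
        else:
            needs_volunteers.append(item_id)   # uncertain: ask people
    return auto_positive, auto_negative, needs_volunteers


# Made-up scores for three messages.
scores = {"msg-1": 0.95, "msg-2": 0.05, "msg-3": 0.50}
print(triage(scores))
```

The answers volunteers give on the uncertain items could then be used as new training data, so the share of items that needs human review shrinks over time.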

But challenges remain. Limited access to data at scale, such as satellite imagery, and to developers and data scientists is still an obstacle to large-scale adoption of these projects by non-profit organizations.

If you want to collaborate on such projects, get in touch.